Shane Wighton, the designer behind the well-known basketball hoop that won’t let you miss, has created a system for obstacle detection that uses the new iPad’s built-in LIDAR scanner.

The LIDAR system works by sending out tiny pulses of light and measuring how long each reflection takes to return. Because the speed of light is known, that round-trip time gives the distance to whatever the pulse hit. Repeating the measurement at regular intervals across an entire room produces a scan that accurately locates objects and obstructions.
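The time-of-flight principle can be illustrated with a little arithmetic. The following is a minimal sketch of the general idea, not Wighton's code or Apple's API; the function names are hypothetical and chosen only for this example.

```python
# Illustrative time-of-flight arithmetic (hypothetical helper names,
# not taken from Wighton's app or any Apple framework).

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in a vacuum


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Return the one-way distance in meters for a measured round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the target and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


def round_trip_for_distance(distance_m: float) -> float:
    """Return the round-trip time in seconds for a target at distance_m."""
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    t = round_trip_for_distance(3.0)
    print(f"Round trip for a wall 3 m away: {t * 1e9:.1f} ns")
    print(f"Distance recovered from that time: {distance_from_round_trip(t):.2f} m")
```

A wall about 3 m away returns the pulse in roughly 20 nanoseconds, which is why this kind of sensing depends on very fast timing hardware.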

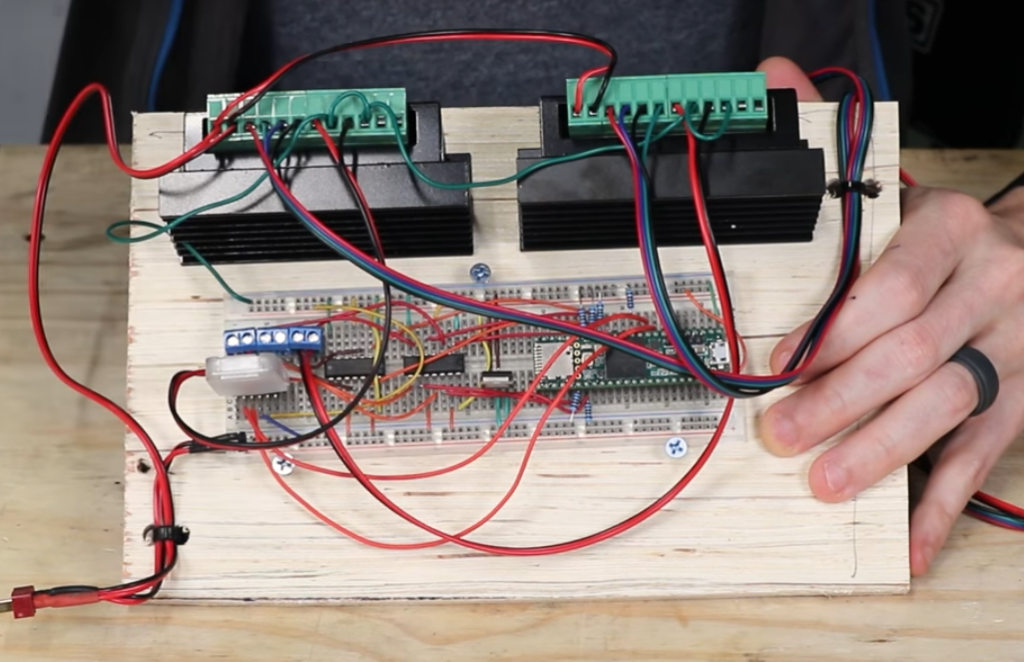

Wighton used the technology to make an app that not only collects data from the LIDAR scans at regular intervals but also visualizes it as an augmented reality overlay, color-coded to show the relative distance of objects. When the iPad is paired with a custom 3D-printed tactile interface that attaches to its back, the data is translated through a mechanism that depresses or exposes a set of pins to indicate the presence of obstacles.

In a video posted to YouTube, Wighton discusses his design process, including how he decided to use the iPad’s LIDAR system and how he built the tactile feedback mechanism, which uses two stepper motors driven by a Teensy 3.6. He also discusses the parts of the project he feels could be improved, as well as his hopes for future iterations, especially if LIDAR were to be released for the iPhone.

You can view Wighton’s other projects on his website and on his YouTube channel, Stuff Made Here.